Apache Hadoop is a software library and framework that uses simple programming models to enable the distributed processing of massive data volumes across clusters of machines. It is built to scale from a single server to many machines, each offering its own local storage and computation. Rather than relying on the hardware to deliver high availability, the library is designed to detect and handle failures at the application level, so that a highly scalable service can be delivered on top of a cluster of machines, each of which may be prone to failure. Reach us to know more about interesting Hadoop Project Topics.

This article gives you a detailed picture of Hadoop projects and explains the aspects needed for Hadoop research.

The major task of our experts in Hadoop project topics has been to identify the gaps in the field's knowledge, open up opportunities for innovative research, and highlight initiatives related to Apache Hadoop and its infrastructure by identifying the important themes under discussion. Let us start by discussing the Hadoop modules.

What are the Modules of Hadoop?

The following are the important modules in the Hadoop projects framework

- Hadoop Distributed File System (HDFS)

- It stores files in a distributed manner across the nodes of a cluster

- Hadoop MapReduce

- It is used to build applications that process large amounts of data

- Hadoop YARN

- It is a computational resource management platform

You may already be well versed in these Hadoop modules; you can also get a good picture of them by looking into the real-time successful Hadoop projects on our website. You can get in touch with our experts for any kind of research assistance and queries on all Hadoop project topics. We can provide you with complete and all-encompassing project support. We are available to assist you at all hours of the day and night. Let us now look into the working of the Hadoop framework.

How Does Hadoop Work?

- Hadoop’s two key capabilities are computation and storage.

- The computational capacity is provided by MapReduce, while the storage capability is provided by HDFS; other storage systems are also supported.

- HDFS is block-structured by nature, and the block size can be configured.

- On the underlying Linux file system, each block is saved as a file.

- A Hadoop cluster can hold an arbitrarily large file by distributing these fundamental blocks across the cluster.

- When you save a file to HDFS, HDFS partitions it into blocks and stores them.

- The input file path and name are specified when you run your MapReduce (MR) job.

- To process the file, the framework locates every block that belongs to it and launches a map task for each block.

- To optimize data locality, each map task is executed on, or close to, the cluster node that stores the block to be processed.
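The steps above can be illustrated with a small in-process simulation. This is only a sketch under stated assumptions: it does not call any Hadoop API, the names `split_into_blocks` and `assign_map_tasks` are hypothetical, the 128 MB block size and replication factor of 3 are assumed values, and replicas are placed round-robin, whereas a real HDFS deployment uses its own placement policy.

```python
from dataclasses import dataclass

MB = 1024 * 1024
BLOCK_SIZE = 128 * MB  # assumed value; the block size is configurable in HDFS


@dataclass
class Block:
    index: int
    offset: int
    length: int
    replicas: list  # names of the nodes that hold a copy of this block


def split_into_blocks(file_size, nodes, block_size=BLOCK_SIZE, replication=3):
    """Partition a file of `file_size` bytes into fixed-size blocks.

    Replicas are placed round-robin over `nodes` (a simplification).
    """
    blocks = []
    offset = 0
    index = 0
    copies = min(replication, len(nodes))
    while offset < file_size:
        length = min(block_size, file_size - offset)
        replicas = [nodes[(index + r) % len(nodes)] for r in range(copies)]
        blocks.append(Block(index, offset, length, replicas))
        offset += length
        index += 1
    return blocks


def assign_map_tasks(blocks, free_slots):
    """Assign one map task per block, preferring a node that stores the block.

    Returns {block index: (node, is_data_local)}.
    """
    slots = dict(free_slots)
    assignment = {}
    for block in blocks:
        local = [n for n in block.replicas if slots.get(n, 0) > 0]
        if local:
            node = local[0]
        else:
            # No replica holder is free: fall back to any node with a free slot.
            candidates = [n for n, s in slots.items() if s > 0]
            if not candidates:
                raise RuntimeError("no free map slots left")
            node = max(candidates, key=lambda n: slots[n])
        slots[node] -= 1
        assignment[block.index] = (node, node in block.replicas)
    return assignment


if __name__ == "__main__":
    nodes = ["n1", "n2", "n3", "n4"]
    blocks = split_into_blocks(300 * MB, nodes)
    for idx, (node, is_local) in assign_map_tasks(blocks, {n: 1 for n in nodes}).items():
        print(f"block {idx} -> {node} (data-local: {is_local})")
```

The second element of each assignment shows whether the task runs on a node that already holds the block, which is the data-locality property described in the last bullet.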

Hadoop has grown in importance in this internet age as a result of these distinguishing characteristics. Let us now talk about Hadoop’s numerical characteristics.

Numerical Features of Hadoop

- Hadoop can handle more than four thousand machines spread across more than twenty clusters

- The largest cluster consists of more than four thousand machines

- The total number of users amounts to about one thousand

- The framework is capable of running more than one lakh (100,000) jobs in a month

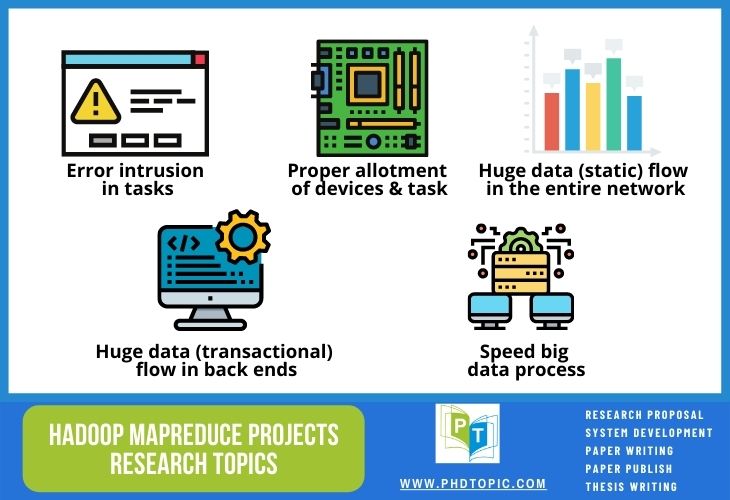

Choosing interesting Hadoop project topics will improve your professional image and accomplishment. Get expert help from engineers and developers who have earned world-class certification by contacting us. What are the challenges in Hadoop?

Hadoop Challenges

The following is a list of important recent problems that still require a lot of research:

- Data consistency – keeping huge volumes of stored data consistent, as in data warehousing, is one of the important fields being studied

- Fault tolerance – protecting crucial information even when nodes go down

- Scalability – ensuring near-linear scaling with a reasonable coefficient by using advanced architectures

- Pipeline efficiency – performance of the pipeline in stream processing architectures that serve multiple purposes

Apart from these challenges and concerns, we also need to look into the following particulars in Hadoop projects:

- Data parallelism is essentially assumed to be built into Hadoop, which is highly optimized for processing large-scale data and suited to shared-nothing computations

- Iterative learning algorithms incur a huge overhead on every iteration

- The same data is scanned multiple times

- Input and output overhead increases because the data is read into the mappers in every iteration

- At times, static data is also read into the mappers in every iteration, for instance the input data in k-means clustering

- Necessity for a separate controller

- Coordinating MapReduce jobs and improving computation performance across iterations

- Measuring and applying the stopping criterion

- Task initialization overhead is incurred repeatedly, since mapper and reducer tasks must be configured for each iteration

- The framework is blocking, which leaves reducers idle until all map tasks are complete

- Data is transferred and shuffled between mappers and reducers through intermediate index and data files on local disks, which the reducers then pull

- In a shared cluster, every iteration must wait for nodes to become available for its mappers and reducers
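The iteration overheads listed above can be made concrete with a toy k-means driver. This is a minimal sketch that runs in a single process and does not use Hadoop; the function names (`kmeans_map`, `kmeans_reduce`, `run_kmeans`), the one-dimensional points, and the tolerance value are all assumptions chosen for illustration. It shows the separate controller loop, the stopping criterion, and the static input being loaded again on every iteration.

```python
def kmeans_map(points, centroids):
    """Map step: emit (index of nearest centroid, (point, 1)) for each point."""
    for p in points:
        nearest = min(range(len(centroids)), key=lambda i: abs(p - centroids[i]))
        yield nearest, (p, 1)


def kmeans_reduce(pairs, centroids):
    """Reduce step: average the points assigned to each centroid."""
    sums = {}
    for key, (value, count) in pairs:
        total, n = sums.get(key, (0.0, 0))
        sums[key] = (total + value, n + count)
    # A centroid that received no points keeps its previous position.
    return [
        sums[i][0] / sums[i][1] if i in sums else c
        for i, c in enumerate(centroids)
    ]


def run_kmeans(load_points, centroids, tol=1e-6, max_iter=50):
    """Controller: one simulated MapReduce job per iteration.

    `load_points` is called on every iteration, mimicking how the static
    input is read into the mappers again each time.
    """
    iterations = 0
    for _ in range(max_iter):
        iterations += 1
        points = load_points()
        new_centroids = kmeans_reduce(kmeans_map(points, centroids), centroids)
        shift = max(abs(a - b) for a, b in zip(centroids, new_centroids))
        centroids = new_centroids
        if shift < tol:  # stopping criterion checked by the controller
            break
    return centroids, iterations


if __name__ == "__main__":
    reads = []

    def load_points():
        reads.append(1)  # count how often the static data is re-read
        return [1.0, 2.0, 3.0, 10.0, 11.0, 12.0]

    final, n_iter = run_kmeans(load_points, [0.0, 5.0])
    print(final, n_iter, len(reads))
```

In this sketch the number of input reads equals the number of iterations, which is exactly the repeated I/O cost that systems built for iterative workloads try to avoid by caching static data.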

Our professionals have dealt with such a wide range of difficult Hadoop algorithm research problems and produced appropriate and innovative solutions. Check out our website for the top 10 Hadoop project topics, as well as the methodologies, techniques, and processes associated.

We guarantee to give you world-class expert project advice by utilizing vast research resources that are both legitimate and up to date. Let us now talk about major research concerns in Hadoop programming

Ongoing Issues of Hadoop Programming

- Data localization and skew (Map and Reduce)

- HDFS enhancements and scheduling

- Speculative execution and straggler concerns
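To give a feel for the reduce-side skew mentioned in the first item, the following sketch counts how many records each reducer would receive under hash partitioning. It is an illustration only: `zlib.crc32` is used as a deterministic stand-in for a key hash, and the names `partition`, `reducer_loads`, and `skew_ratio` are hypothetical.

```python
import zlib
from collections import Counter


def partition(key, num_reducers):
    """Hash-partition a string key onto one of `num_reducers` reducers."""
    return zlib.crc32(key.encode("utf-8")) % num_reducers


def reducer_loads(keys, num_reducers):
    """Count the records routed to each reducer."""
    loads = Counter({r: 0 for r in range(num_reducers)})
    for key in keys:
        loads[partition(key, num_reducers)] += 1
    return loads


def skew_ratio(loads):
    """Largest reducer load divided by the mean load (1.0 means balanced)."""
    mean = sum(loads.values()) / len(loads)
    return max(loads.values()) / mean if mean else 0.0


if __name__ == "__main__":
    # One "hot" key dominates the input, so one reducer receives most records.
    keys = ["hot"] * 90 + [f"k{i}" for i in range(10)]
    loads = reducer_loads(keys, 4)
    print(dict(loads), round(skew_ratio(loads), 2))
```

A ratio well above 1.0 means one reducer becomes a straggler regardless of how many nodes are available, because all records with the same key must go to the same reducer.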

In general, we present conceptual and technical insights and examples to help our customers better understand all of the above mentioned issues in Hadoop programming. We provide them the ability to choose the methodology that is most suited to their research needs in this way. Let us now look into major terms in Hadoop.

Important Terminologies of Hadoop

- MapReduce

- Scheduling and flow of data

- Efficient allocation of resources

- Manipulating and storing data

- Storing and replication

- Cloud computing and storage

- Queries and random access

- DBMS and indexing

- Ecosystem

- HBase, Pig, and Hive

- Novel components

- Miscellaneous items

- Cryptography and data security

- Management of energy

Often, our experts assist our customers by providing detailed descriptions of all these terms. We also render full support in selecting suitable project topics, idea construction, integrating advances, selecting an algorithm, dealing with them, resolving challenges, project design improvement, prototypes, testing, and road mapping, along with many other things. We also guarantee that we will provide you with custom project support services to enable you to do the best research in every phase of the project’s progress. We will discuss some of the important tools and databases for the integration of Hadoop and big data

Different Hadoop Databases and Tools for Big Data processing

- TitanDB

- It is a distributed graph database that supports Cassandra and HBase as storage backends

- Ganglia and Apache Ambari

- These are monitoring tools

- RHadoop

- It is one of the important technologies that work on top of Hadoop streaming

- R code is executed as a mapper or reducer

- It is not primarily meant for interactive analysis, but it is highly helpful in the offline analysis of batches

- SparkR is another important tool under development that has the potential to become an attractive, highly scalable solution

- SparkR becomes more useful when it is integrated with Zeppelin ScaleR

- It is all done to ensure that parallel computation can be performed using R programming while everything outside is kept simple

- HiBench

- This tool is useful in Hadoop performance evaluation

- Spark GraphX

- It is used in the manipulation of graphs and to store them in HDFS

On our website, we’ve also covered the technical aspects of many Hadoop methodologies as mentioned above. You can use these tools in your project, or you can also come up with your ideas for which our experts are here to provide you with full support. We can help you create and implement any kind of creative and innovative approach. Let us now see about the improved Hadoop versions,

Improved Versions of Hadoop

- Hadoop Machine Learning and Twister

- Spark and HaLoop

- MapReduce online and iMapReduce

- Worker and aggregator structures

Big data analytics can be greatly improved by using Spark with Hadoop. Apache Spark is reported to run about a hundred times faster than Hadoop when the data is cached in main memory, and about ten times faster when it is held on disk. It works like a Hadoop-like system but avoids reading from the disk wherever possible. Machine learning libraries such as ML and MLlib are also included in Spark.

We’re here to help you with all of the essential tools, methodologies, procedures, and operations. We are familiar with all of the project requirements of all of the world’s best institutions, so we can help you satisfy your organization’s needs effectively. What are the distributed learning algorithms for Hadoop?

Distributed Learning Algorithms for Hadoop

The following are some of the important aspects of distributed learning algorithms for Hadoop projects

- Several mappers and multiple reducers can be used to learn a particular model

- It can be used for learning algorithms that involve heavy computation per data item and multiple learning iterations, and that do not involve data transfer between iterations

- It is useful in learning typical algorithms in the following ways

- Fitting the statistical query model, either in a few iterations (linear regression, K-means clustering, Naive Bayes, pair-wise similarity, and so on) or in multiple iterations with high overheads (logistic regression, SVM, etc.)

- Dividing and conquering, as in frequent itemset mining, approximate matrix factorization, and so on
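As an illustration of the statistical query idea for linear regression, each mapper can compute partial sums over its own split and a single reducer can add them up and solve for the coefficients. The sketch below is a plain Python simulation for a one-variable model y = a + b·x; the function names and the way the data is split are assumptions, not part of any Hadoop library.

```python
def map_partial_sums(split):
    """Mapper: summarize one split of (x, y) pairs as sufficient statistics."""
    n = len(split)
    sx = sum(x for x, _ in split)
    sy = sum(y for _, y in split)
    sxx = sum(x * x for x, _ in split)
    sxy = sum(x * y for x, y in split)
    return (n, sx, sy, sxx, sxy)


def reduce_sums(partials):
    """Reducer: add the statistics from all mappers component-wise."""
    return tuple(sum(column) for column in zip(*partials))


def fit_line(splits):
    """Least-squares fit of y = intercept + slope * x from the global sums."""
    n, sx, sy, sxx, sxy = reduce_sums(map_partial_sums(s) for s in splits)
    denom = n * sxx - sx * sx
    if denom == 0:
        raise ValueError("all x values are identical; slope is undefined")
    slope = (n * sxy - sx * sy) / denom
    intercept = (sy - slope * sx) / n
    return intercept, slope


if __name__ == "__main__":
    data = [(x, 2 * x + 1) for x in range(6)]
    splits = [data[:3], data[3:]]  # two mappers, three records each
    print(fit_line(splits))
```

Because only five numbers leave each mapper, the model is learned in one pass with very little data shuffled, which is why such algorithms fit MapReduce well.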

Thus far, we’ve covered all of the prerequisites for working on Hadoop projects. Without a doubt, we can provide you with the most reliable online research and project assistance. Now let us look into some of the prominent algorithms involved in different aspects of any Hadoop projects

- Classification algorithms

- Random forest and logistic regression

- Naive Bayes and complementary Naive Bayes

- Clustering algorithms

- Spectral, Dirichlet process and mean-shift clustering

- Latent Dirichlet allocation and canopy

- K means and fuzzy k means

- Stochastic sequential gradient descent, parallel FP growth, and recommendations based on items

For many more algorithms, protocols, software packages, and programming languages, and for process simulation, you can contact us. We are experts in providing every kind of aid to ensure seamless and personalized execution of these processes. We have highly trained and renowned experts and analysts who can help you with code implementation, simulations, program writing, and many other aspects of your research. What are the top 10 Hadoop research topics?

Top 10 Interesting Hadoop Project Topics

- Apache Hive Web Data Mining

- Apache Hadoop Medical Data Mining

- Hadoop Wikipedia Page Rank

- Social Media Emotion Analysis

- Hadoop Clusters Scheduling Scheme

- Spark Unstructured Data Mining

- Reliability of Transactional Models

- Byzantine Fault-tolerance

- Least Timeliness Protocol Model

- Readiness & Reliability by CAP

Consult our technical team for any help on this list of Hadoop project topics that we are currently developing. We provide expert replies to any questions and queries based on concepts and clear any issues that may occur during the completion of your project. We can provide you with the most exciting specialist project guidance. We will now see the parameters used for the evaluation of Hadoop projects

QoS Parameters of Hadoop

- System performance

- Quantity of estimated and completed work relative to the time and resources required

- Load balancing

- Load distribution over several computer resources is enhanced

- Scalability aspects

- Dynamicity of the Hadoop cluster and its ability to be reconfigured according to the circumstances

- Heterogeneous nature

- Computational power and capacity differ from node to node, which makes data centers heterogeneous

- Cost incurred

- Cost in terms of both manpower and money has to be made minimal

- Makespan

- The total time taken for completing a job from starting till the end is called makespan

- Fault tolerance

- Proper system functioning amid component failure

- Localisation of data

- Computation should be moved close to the data, since moving the data to the computation is usually not recommended
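Two of the parameters above, makespan and load balancing, can be computed directly from a task schedule. The following sketch assumes a hypothetical trace format of `(node, start, end)` tuples in seconds and uses "maximum busy time divided by mean busy time" as one possible imbalance measure; neither is a Hadoop-defined format or metric.

```python
from collections import defaultdict


def makespan(tasks):
    """Time from the earliest task start to the latest task end."""
    tasks = list(tasks)
    earliest = min(start for _, start, _ in tasks)
    latest = max(end for _, _, end in tasks)
    return latest - earliest


def busy_time_per_node(tasks):
    """Total time each node spends running tasks."""
    busy = defaultdict(float)
    for node, start, end in tasks:
        busy[node] += end - start
    return dict(busy)


def load_imbalance(busy):
    """Maximum busy time over mean busy time (1.0 means perfectly balanced)."""
    mean = sum(busy.values()) / len(busy)
    return max(busy.values()) / mean


if __name__ == "__main__":
    # Hypothetical trace: n1 runs two tasks back to back, n2 and n3 one each.
    trace = [("n1", 0, 10), ("n1", 10, 30), ("n2", 0, 10), ("n3", 5, 15)]
    busy = busy_time_per_node(trace)
    print(makespan(trace), busy, load_imbalance(busy))
```

In this trace the overloaded node alone determines the makespan, which shows why load balancing and makespan are usually evaluated together.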

All our projects have shown great results concerning these parameters. You may certainly contact our technical experts for technical notes and information on tools and methodologies for Hadoop. We will assist you in better understanding them by providing practical explanations based on real-time recent cases stated in major journals and benchmark references.

For the past fifteen years, we have provided trustworthy, strong research supervision and project support to world researchers and students from over 140 countries around the world through creative Hadoop project topics and concepts. As a result, we are competent at delivering effective Hadoop projects. We are well-known for providing confidential research assistance in all of the Hadoop research areas. We strongly advise you to visit our website for additional information about our successful projects.